Keeping up with the overwhelming pace of AI innovation

Or, why it increasingly feels like we're living inside of an exponential function

It has continued to be a wild few weeks in the world of AI. I spoke a few weeks ago about humanity’s challenges in understanding the exponential function. It’s fittingly ironic that I now find myself shocked at the amount of progress we’ve seen in the last few weeks. As the first quarter of 2023 comes to a close, I thought it might be a good time to reflect on what’s happened in the AI world so far. But like all good stories, I think it’s best to try to start at the beginning.

Party like it’s 1945

While mechanical computers had been around since the early 1800s, most historians agree that the first digital computers were invented in the 1940s. This represented the first true step towards the development of artificial intelligence, though it might’ve been hard to see it at the time. We’ll come back to that.

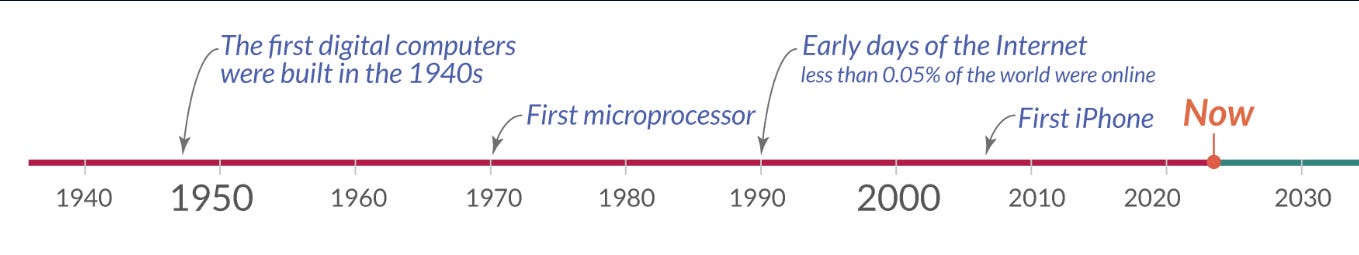

The invention of the digital computer also represented the first true “platform shift” of the last century (pre-dated by events such as the industrial revolution, the discovery of electricity, and the invention of the printing press). While historically these generational discoveries occurred ~once per century, in the last 100 years, they seem to have emerged roughly once every 20 years (charts provided by Our World in Data):

In the modern capitalist era, all of the above events represent the beginnings of technological paradigms that defined how business was done for the next two decades: the rise of computing, the emergence of the personal computer, the birth of the internet, and the shift to mobile. All of these events coincided with massive explosions of worker productivity, disruptions of incumbent businesses, and the emergence of new, generational companies.

These are the golden eras of innovation that venture capital was built for, wherein startups ride the wave of exponential adoption to build new platforms that solve problems previously thought to be unsolvable. I think it’s safe to say the adoption of AI has shown early promise of delivering the next era.

The 80 year overnight success

As noted above, the development of modern AI really began in the 1940s, but the rise of AI certainly wasn’t a straight line. Inherently, AI is hard. We still don’t really know how the human brain works, so trying to understand how to replicate it has often been seen as a fool’s errand. As the below chart shows, breakthroughs had been few and far between, with multiple “AI winters” sprinkled in:

When asked about the development of modern AI, I typically point to the work of University of Toronto professor Geoff Hinton, often referred to as the “godfather of AI”. Beginning with his work on backpropagation in the 1980s, Geoff and his team popularized the technique of “deep learning” (using massive amounts of data to train a type of AI called a neural net), culminating in the unveiling of a neural net dubbed “AlexNet” in 2012.

AlexNet is notable in that it represented a step change in machine “intelligence” (in this case, in image recognition). All AlexNet could do at the time was classify images into categories such as dogs or cats, but it won the 2012 ImageNet competition by a wide margin over every earlier approach, and successor models reached human-level accuracy on that benchmark within a few years. After decades of naysayers claiming that real AI was an impossibility, the scientific community had renewed conviction that it was more than just a dream.

Up until that point, compute usage in AI had roughly followed the trajectory of Moore’s Law (doubling every two years). Afterwards, however, it began to significantly exceed that, doubling on a much shorter cycle as more and more believers entered the field. This acceleration of interest in AI was the catalyst that led to the development of the most recent breakthroughs we’ve seen in the past few years.
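To make the gap concrete, here is a minimal sketch of how quickly two doubling schedules diverge. The two-year period is the Moore’s Law figure quoted above; the six-month period is a purely hypothetical stand-in for “faster than Moore’s Law”, not a measured number, and the function name is my own.

```python
def growth_factor(years: float, doubling_period_years: float) -> float:
    """How many times a quantity multiplies over `years`
    if it doubles once every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)


MOORE_DOUBLING_YEARS = 2.0   # from the text: doubling every two years
FASTER_DOUBLING_YEARS = 0.5  # hypothetical value, for illustration only

for years in (2, 4, 10):
    moore = growth_factor(years, MOORE_DOUBLING_YEARS)
    faster = growth_factor(years, FASTER_DOUBLING_YEARS)
    print(f"{years:>2} years: {moore:,.0f}x vs {faster:,.0f}x")
```

Under these assumptions, a decade of two-year doublings yields a 32x increase, while a decade of six-month doublings yields roughly a million-fold increase. Both curves are exponential; what changes is the doubling period, and that is what makes the second feel so much steeper.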

Accelerating compute budgets are not interesting in isolation, though. When looking at what they’ve enabled in recent years, I often point to the below chart which outlines how breakthroughs in AI have related to developments in the realm of language:

Needless to say, the results are astounding. Today, AI matches or beats the average human on effectively every language task in the chart. Perhaps most interestingly, the developments occurred incredibly rapidly in the last few years as the exponential growth of AI development came into effect. One can only imagine what we’re in store for over the next few years.

Okay Speed Racer: it’s getting faster. What now?

Given the rapid acceleration of developments in AI, it may no longer be feasible to speak about breakthroughs in terms of years; instead, we’re now looking at major developments in terms of weeks or days. To exemplify this, below is a non-exhaustive list of significant AI breakthroughs in Q1 of 2023 alone:

February 2nd, 2023: ChatGPT reaches 100 million users, making it the fastest adopted product in human history (notably, the second fastest instance was TikTok, another AI-first product)

February 22nd and March 8th, 2023: Notion and HubSpot each release their own AI-powered product offerings, in remarkably fast reactions to the disruptive threat that ChatGPT poses to their businesses

March 9th, 2023: LangChain becomes the fastest Open Source project to reach 10,000 GitHub stars in the AI era (ever?) after just 5 months. See below chart for the comparison to HuggingFace (10 months) and PyTorch (18 months)

March 14th, 2023: OpenAI releases GPT-4, their first multimodal language model. The release also greatly expanded GPT’s context window, allowing for far better performance against niche asks (and therefore significantly reducing the need for fine-tuning of language models)

March 21st, 2023: Bill Gates publicly releases his essay declaring that “The Age of AI has begun”, certainly lending support to the idea that AI will represent the next major technology platform shift

March 22nd, 2023: Microsoft releases a research paper titled “Sparks of Artificial General Intelligence”, suggesting that GPT-4 represents the first true step towards the holy grail of AI, Artificial General Intelligence (AGI)

Unsurprisingly, Gary Marcus quickly came out on his Substack arguing both that the paper was a “press release masquerading as science” and that Microsoft and OpenAI were in a position to “end democracy”

March 23rd, 2023: OpenAI releases ChatGPT plugins, less than 10 days after GPT-4 is released, offering tools for other businesses to quickly integrate into OpenAI’s platform (loosely analogous to an “app store for GPT”).

Notably, this may have wiped out an entire Tooling layer of startups building on top of Foundation Models, which has been the single hottest category in all of VC over the last quarter (talk about disruption!)

For those of you who are interested, I highly recommend watching the ChatGPT plugins demo in the link above, which certainly feels like the beginning of a new technology paradigm

ALSO March 23rd, 2023: TikTok CEO Shou Zi Chew testifies in front of US lawmakers, in an attempt to prevent an outright ban of the product given national security concerns. This represents the first major “AI national security” case that has reached the US Congress, but it certainly won’t be the last

Obviously, the pace of “breakthroughs” seems to have accelerated to the point where there’s a new development in the space every single day.

Key Takeaways

Given the raw pace of innovation in the space, it’s challenging to be truly prescriptive in times like these. Instead, I’ve listed a few thoughts that are top of mind for me right now:

Clearly, no business is safe at this point. In every single board meeting in 2023, every Fortune 500 CEO is being asked what their AI strategy will be, and I’m not sure most of them will have good answers. That’s a big deal

Incumbents are reacting faster than ever. Notion and HubSpot were mentioned above, but Zapier, Expedia, Instacart, and Kayak were amongst the first to build ChatGPT plugins. Perhaps because many of these companies were born in periods of disruption, they’re more fearful of it. If nothing else, it feels like mature businesses are more aware of the Innovator’s Dilemma than ever before

Both investors and engineers feel paralyzed in this moment. Who’s to say that OpenAI or an incumbent isn’t working on this specific problem at this very moment? How far away is a press release that will invalidate what you’re currently working on? Given the pace of innovation, it certainly feels like our discussion last week on economic moats is more important than ever

While later stage investors are paralyzed, Pre-Seed and Seed rounds in AI are getting done faster than ever as early stage investors are looking to purchase lottery tickets with asymmetric upside. The “spray and pray” strategy used by many angel investors feels like it might actually be a valid one in this moment

Increasingly, I’m becoming more and more concerned about the mismatch between the pace of innovation in the space and the pace of regulatory reaction. Our institutions were created to be methodical and intentional in their decision making, but how does that hold up in the face of exponential technological innovation?

Global regulators took more than a decade to react to the emergence of social media companies, and even then it felt like regulators had dated understandings of how those companies operated. What happens this time?

I would note that I worry about both overreactions and underreactions here. Surely, the ethical implications of AI need to be regulated in some capacity. But an overcorrection (e.g., an outright ban on AI) would be equally dangerous in an era in which AI becomes the critical technology layer of the future. More than ever, it feels like there is a need for significant collaboration between the public and private sectors

As always, I’d love to hear from readers on what their thoughts are on the current situation and opportunity set. Thanks for reading!

I wanted to share an anecdote. I know of a company that was working with AI to gather company IP and answer questions about it using a language model. They have been in this space for around 7 years already. They were really well ahead of their time and should have moats... but unfortunately the Microsoft and Google integrations that were recently announced are sure to wipe them off the board because of 2 key points: Microsoft and Google have access to better models, trained with hundreds of thousands of dollars, to start from, and they can flip a switch and give it away for free as a way to attract customers. It's fascinating to see that amount of forethought be put to shame. Warren Buffett is credited as saying: at the turn of the last century there were tons of car companies all competing to be the best, but almost all of them went bankrupt where Ford succeeded. Knowing the next innovation wave doesn't mean you have the wherewithal to invest in the next innovation wave.

Ryan, this is a very timely post summarizing the latest developments. I'm curious what your go-to sources are for keeping up with relevant news and opinion pieces while filtering out the noise/hype.

Additionally, in case you are looking for topics to write about for next week, two friendly suggestions are: 1) the implication of ChatGPT plug-ins, and 2) your elaboration on why and how a spray and pray strategy by angel investors "might actually be a valid one at this point".

On the first topic suggestion, Benn Stancil argued in his Mar 24 Substack post (https://benn.substack.com/p/the-public-imagination) that OpenAI could evolve into both an AWS and an App Store. It would be interesting to hear your thoughts on what the plug-in play means for start-ups, VCs, and big tech.